Discover how finance teams can use Looker Conversational Analytics to monitor receivables, identify exposure risks, and accelerate AR decision-making.

Finance

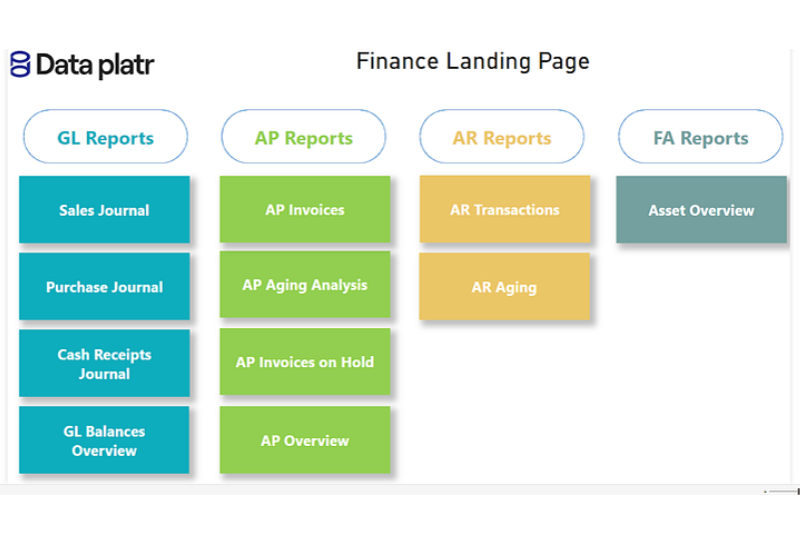

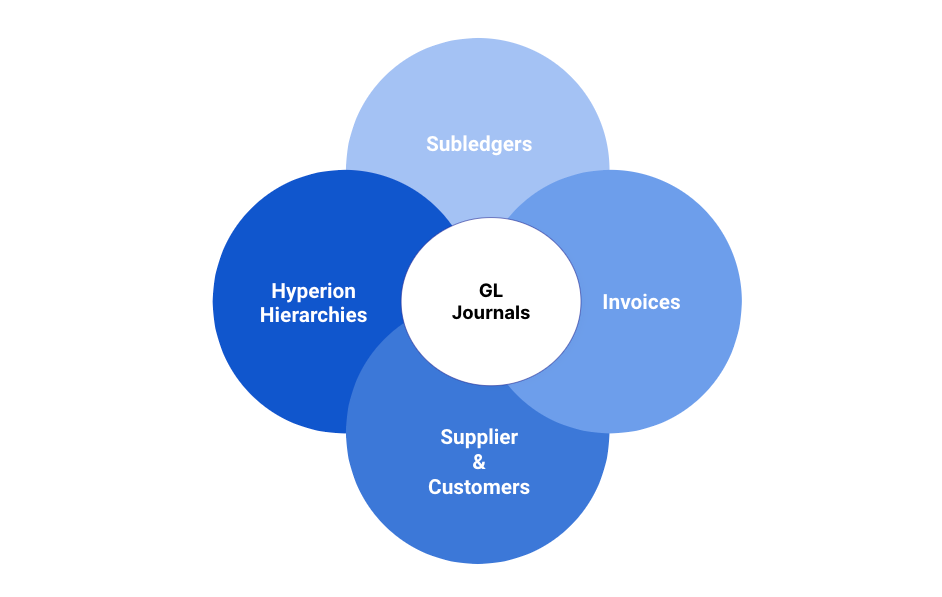

Oracle EBS General Ledger Modernization with Databricks

Learn how Databricks Lakehouse modernizes Oracle EBS General Ledger reporting with pipelines, data ingestion and high-performance financial analytics.

Similar Blogs

-

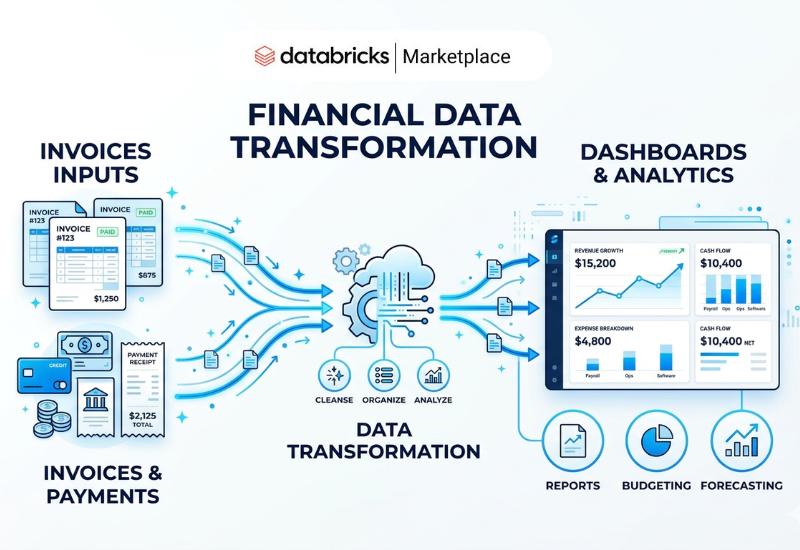

Finance Accounts Receivable Analytics & Cash Flow Optimization

Optimize Accounts Receivable Analytics with Databricks. Gain faster insights into aging, receipts, and cash flow with governed, scalable data pipelines.

-

Finance Advanced General Ledger Analytics with Databricks & Delta Lake

Build a finance-grade General Ledger Analytics platform with Databricks. Deliver consistent KPIs, faster reporting, and enhanced audit traceability.

-

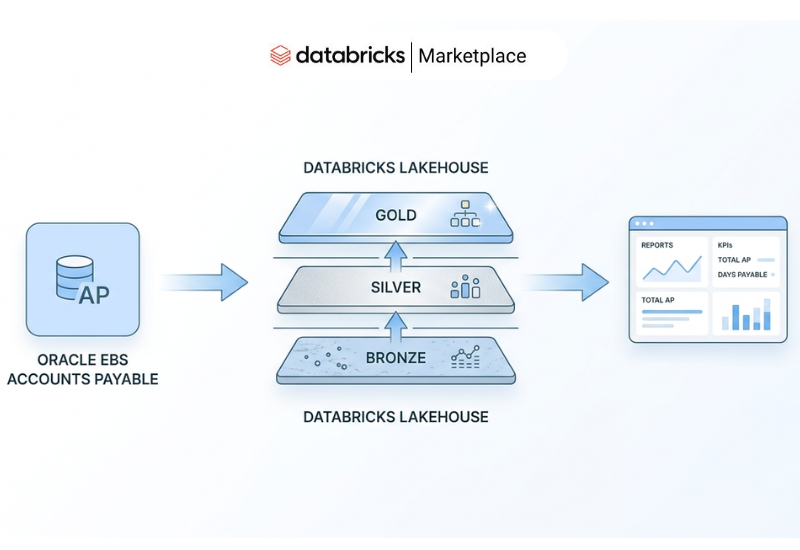

Finance Oracle EBS Accounts Payable with Databricks Lakehouse

Modernize Oracle EBS Accounts Payable with Databricks Lakehouse. Enable real-time insights, scalable AP analytics, automated pipelines, and financial data.

-

Finance Oracle EBS General Ledger Modernization with Databricks

Learn how Databricks Lakehouse modernizes Oracle EBS General Ledger reporting with pipelines, data ingestion and high-performance financial analytics.

-

Finance Operational Finance Analytics with Sigma Workflow and Alerts

Dataplatr helps finance teams operationalize analytics with Sigma Workflow and Alerts by building automation and real-time actions into dashboards.

-

Finance Close the Budget & Analytics Gap with Sigma Write Back

Eliminate manual budget uploads and IT delays. See how Sigma Input Tables enable real-time Sigma Write Back and faster finance decisions.

-

Finance Build Interactive Sigma Dashboard with Embedded Actions

Learn how an interactive Sigma dashboard uses embedded actions, filters, and drill-downs to deliver faster, self-service analytics.

-

Finance Financial Analytics: Unlock Accounts Receivable KPIs and Dashboard for actionable insights

Master Accounts Receivable KPIs with comprehensive dashboards. Track AR aging, invoice amounts, payment schedules, and cash receipts for data-driven decisions.

-

Finance Oracle EBS to GCP: Accounts Payable

Complete Oracle EBS migration guide for Accounts Payable on GCP. Includes BigQuery models, Cloud Composer automation, and Looker dashboards.

-

Finance Accounts Payable Analytics Accelerator | Dataplatr

Learn what accounts payable analytics is and how Dataplatr’s AP Analytics Accelerator transforms Oracle, SAP, and NetSuite AP data into real-time insights.

-

Finance Accounts Receivable Automation with Agentic ELT

Boost AR efficiency with Accounts Receivable Automation using Dataplatr’s Agentic ELT: automated SQL, AR aging models, and clean financial pipelines.

-

Finance Dataplatr Launches Oracle Financial GL Analytics Accelerator on Databricks Marketplace

Dataplatr launches the Oracle Financial GL Analytics Accelerator on Databricks Marketplace, enabling faster financial reporting and real-time insights for enterprises.

-

Finance Sigma Access Control & Data Governance Best Practices

Learn how to implement Sigma access control with data governance best practices. Discover folder-level permissions, role-based access, and secure analytics.

-

Finance Empowering Financial Decision-Making using Databricks and Power BI Insights

Achieve smarter financial decision-making with Dataplatr by using Databricks for data processing and Power BI for insights.

-

Finance Unleashing Oracle EBS Financial Reporting in Google Cloud Platform & Looker

We recently implemented a robust financial analytics framework on Google Cloud Platform (GCP).

-

Finance Complete Guide to implement a Data Warehouse with Microsoft Fabric

Learn how a data warehouse in Microsoft Fabric transforms analytics with scalable solutions for insights and data integration.

-

Finance Modernize On-Prem Oracle Business Intelligence GL with Google Cloud & Looker — Part 2

This article continues from Part 1, focusing on the technical aspects of General Ledger KPIs and dashboards.

-

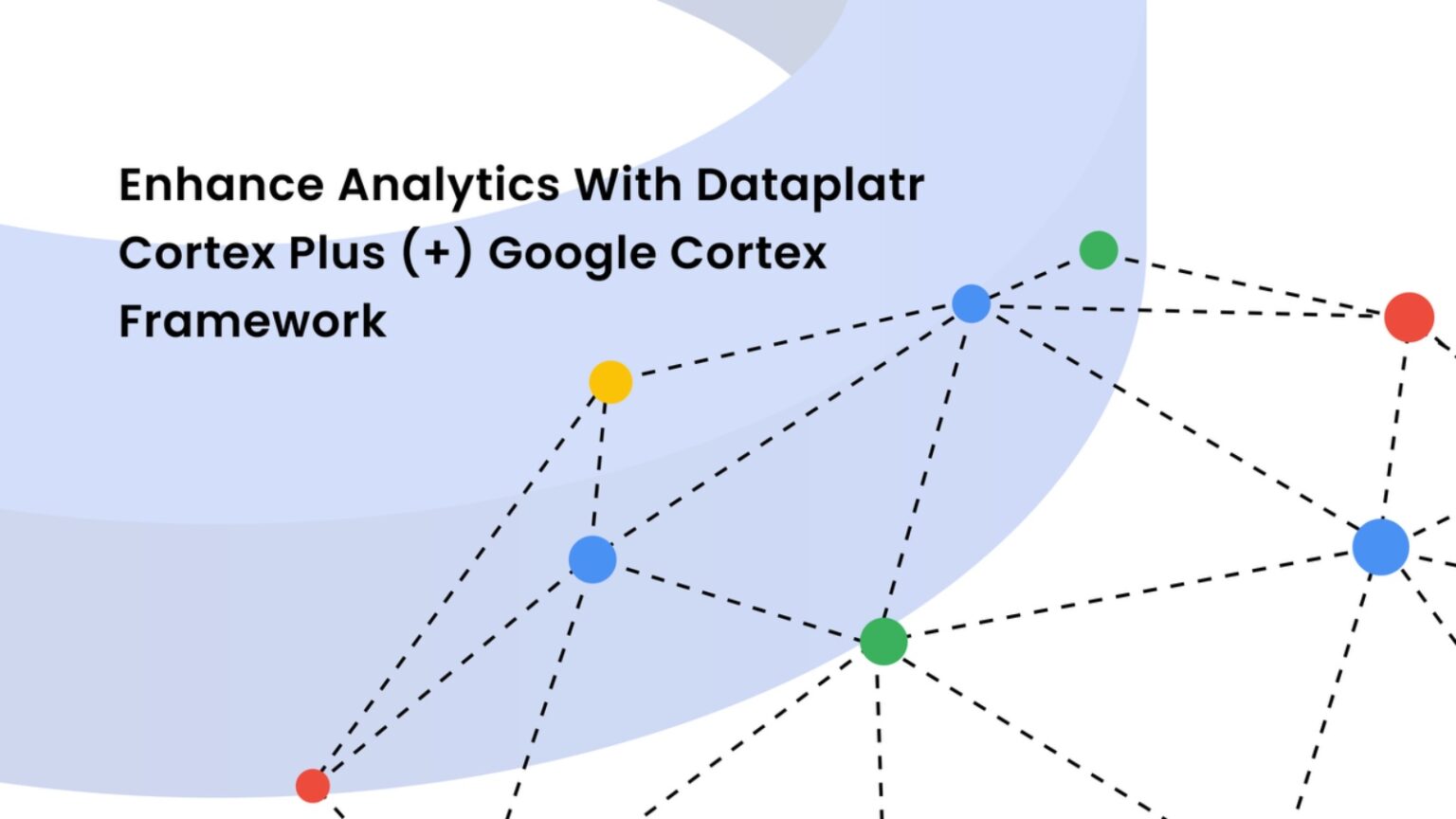

Finance Cortex+ Framework: Advanced Data Integration on Google Cloud

Discover how Dataplatr’s Cortex Plus Framework, built on Google Cloud Cortex, transforms enterprise data integration, modeling, and analytics for faster insights.

-

Finance Procurement & Spend Analytics with Power BI on Microsoft Fabric

Optimize procurement and spend analytics with advanced dashboards, KPIs, and data models using Microsoft Fabric and Power BI.

-

Finance Oracle Financial Analytics: General Ledger KPIs and Dashboards for Actionable Insights - Part 1

Discover how Oracle Financial Analytics revamps business finance with real-time insights.