Learn how data analytics can improve email marketing ROI through audience segmentation, campaign optimization and insights using HubSpot and Databricks.

Data Analytics

Google ADK Context Management: Advanced Chatbot Strategies

Explore Google ADK context management techniques for chatbots. Learn about sub-agents, token optimization, seamless handoffs, and multimodal AI capabilities.

Similar Blogs

-

Data Analytics

Leveraging Data Warehouse Custom Functions in Sigma

See how Sigma wrapper functions make warehouse-based business logic easy to use, improving reporting consistency, governance, and decision-making.

-

Data Analytics

GUI-Based Data Modeling in Sigma | Drag & Drop + SQL

Explore Sigma’s GUI-based data modeling approach. Build high-performance semantic layers on Snowflake, BigQuery and Redshift.

-

Data Analytics

Agentic ELT for Supply Chain Analytics on Databricks

Unify supply chain analytics with Dataplatr’s Agentic ELT Accelerator. Automate purchase order (PO), inventory, and logistics data processing and insights with AI-driven pipelines on Databricks.

-

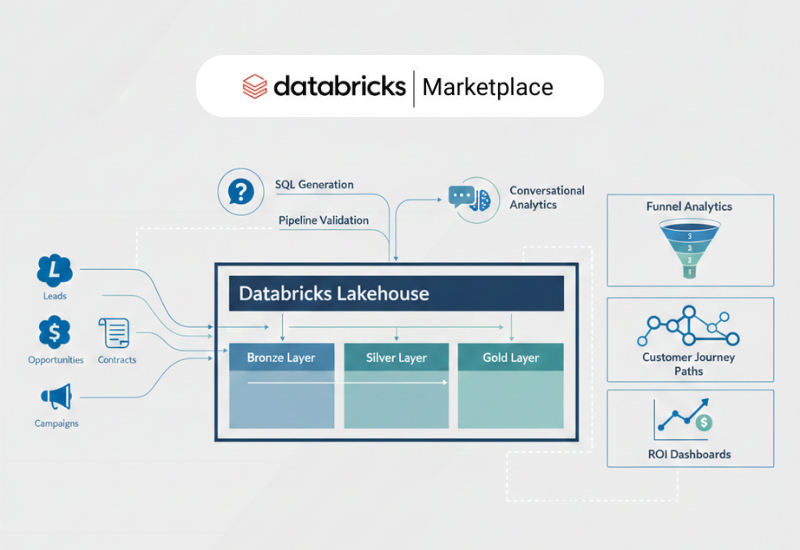

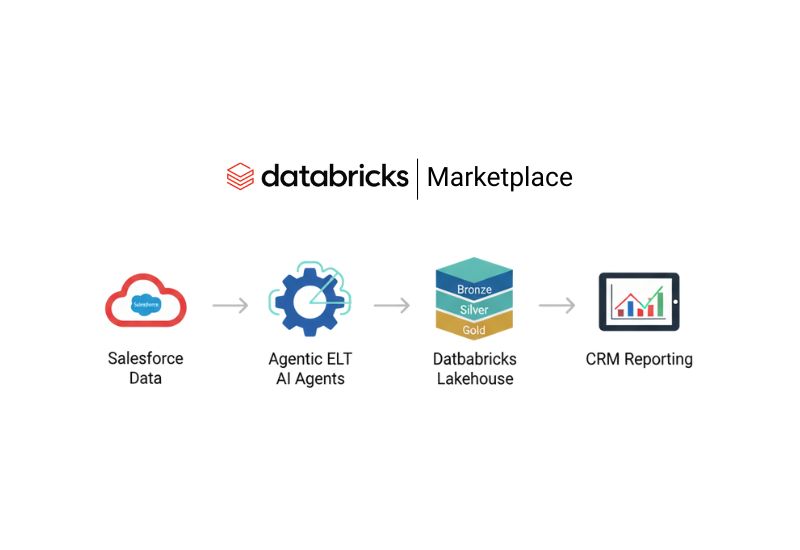

Data Analytics

Agentic ELT for Enterprise Sales + Customer Journey Analytics on Salesforce

Discover how Agentic ELT transforms Salesforce data into unified customer journey analytics with AI-driven automation on Databricks.

-

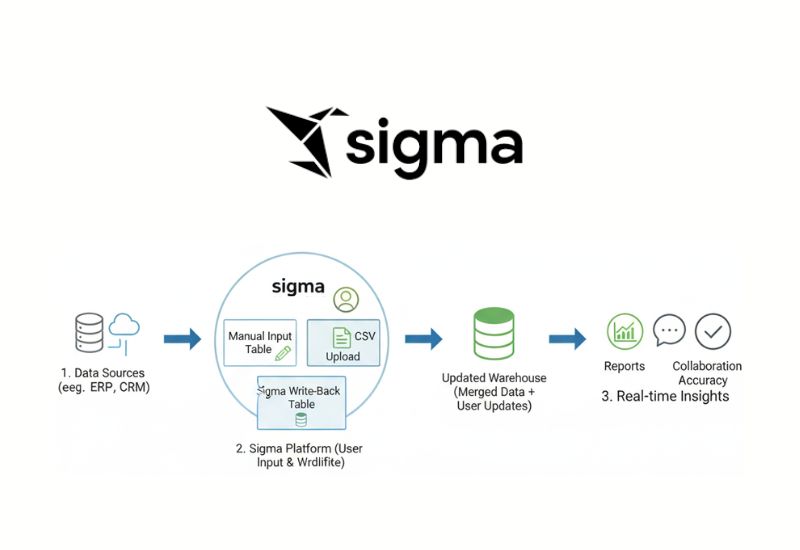

Data Analytics

Sigma Input & Write-Back Tables Explained

Learn how Sigma Input & Write-Back Tables let business users enter and update data directly in Sigma, improving accuracy, collaboration, and real-time insights.

-

Data Analytics

Sensitive Data Handling Framework for PII and PCI Compliance

A complete framework for PII and PCI compliance: detect, classify, and mask sensitive data at scale while enabling secure analytics.

-

Data Analytics

AI-Driven SLA Architecture for Predictive Case Closure

Discover how AI-driven SLA Architecture helps enterprises predict delays, prevent SLA breaches, automate escalations, and improve case closure efficiency.

-

Data Analytics

Predictive Analytics: What It Is, How It Works & Why It Matters for Modern Enterprises

Discover how predictive analytics works, along with its use cases and real-world applications. Learn about predictive modeling and its role in decision-making.

-

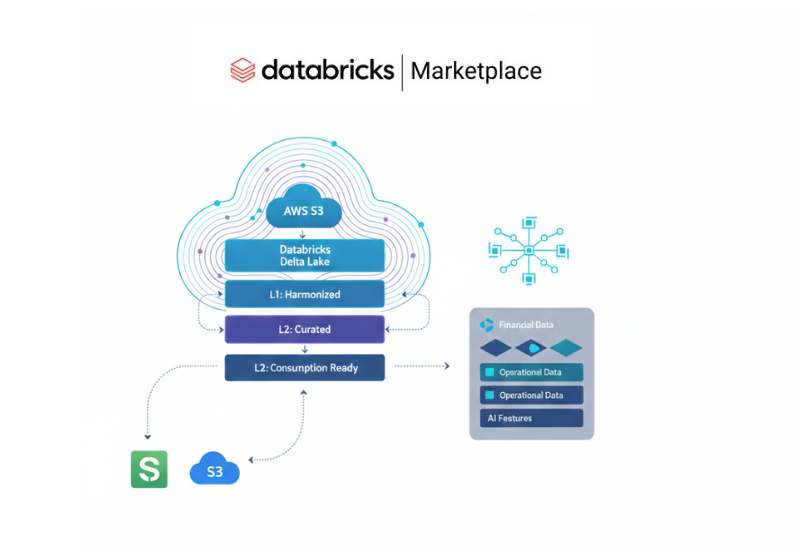

Data Analytics

AI-Powered Sage Intacct Data Analytics on Databricks

Learn how AI-powered Sage Intacct analytics improve financial reporting and boost visibility and accuracy with real-time insights and advanced forecasting.

-

Data Analytics

Salesforce ELT Automation with Dataplatr Agentic ELT

Dataplatr’s Agentic ELT Accelerator makes Salesforce ELT simple. It keeps data fresh, cuts manual work, and creates clean pipelines for faster, reliable CRM reporting.

-

Data Analytics

Predictive AI: The Future of Data-Driven Decision Making for Enterprises

Discover how Predictive AI helps businesses forecast trends, reduce risks, and make smarter decisions with Dataplatr’s advanced AI analytics solutions.

-

Data Analytics

The Self-Healing Data Pipeline: Ensuring Financial Data Integrity for AI

Automate financial data cleansing with a self-healing data pipeline that fixes errors using GenAI, ensuring clean, accurate, audit-ready data for AI and reporting.

-

Data Analytics

Driving Business Impact with the Sigma Data Model

See how the Sigma Data Model simplifies data use, helping businesses make better choices, improve outcomes, and drive long-term success.

-

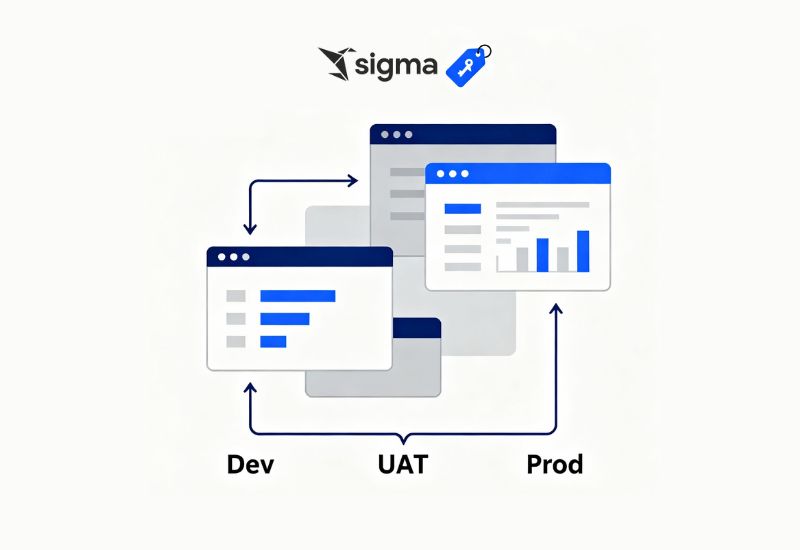

Data Analytics

Sigma Version Tagging for Efficient Dashboard Management

Simplify Dev, UAT, and Prod workflows, reduce errors, and streamline dashboards with Sigma version tagging.

-

Data Analytics

AI-Powered Procurement Finance & Analytics Webinar | Built on Google Cloud

Join the Google Cloud (GCP) webinar to learn how AI in procurement finance and financial analytics simplifies data integration and accelerates business performance.

-

Data Analytics

Get Faster Insights with ELT Automation and Medallion Architecture

Discover how ELT automation with a medallion architecture, agentic AI, and automatic data governance makes data easier to manage and faster to process.